Crawlkit

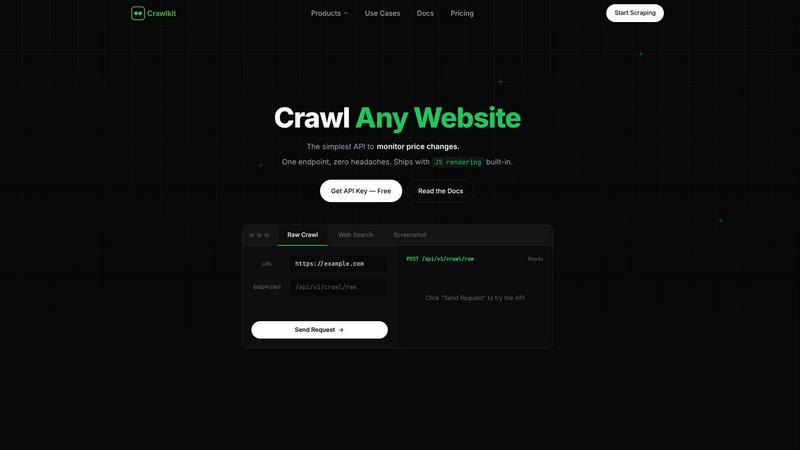

CrawlKit is an API-first web scraping platform that extracts structured data from websites with a single API call.

About Crawlkit

CrawlKit is a web data extraction platform for developers and data teams who need a dependable, scalable way to gather web data without running their own scraping infrastructure. Scraping at scale usually means dealing with rotating proxies, headless browsers, anti-bot protections, and frequent website changes. CrawlKit takes over that work so that users can concentrate on using the extracted data rather than on acquiring it. A single API request covers proxy rotation, browser rendering, retries, and block handling, and returns structured data from sources such as LinkedIn, Instagram, and app stores.

The platform exposes multiple data types through one consistent interface: raw page content, search results, visual snapshots, and professional data.

Features of Crawlkit

Simplified Data Extraction

CrawlKit offers an API that extracts structured data from a website or platform in a single call. Developers skip the setup work of a scraping stack and can focus on their own projects rather than on the technical details of web scraping.
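To make the "single API call" idea concrete, here is a minimal sketch of what such a request might look like. The endpoint URL, the bearer-token header, and the `{"url": ...}` payload are assumptions made for illustration; none of them come from CrawlKit's documentation. The sketch only builds the request and does not send it.

```python
import json
import urllib.request

# Hypothetical endpoint and payload shape, for illustration only.
API_URL = "https://api.crawlkit.example/v1/extract"


def build_extract_request(target_url: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) one POST request asking for data from target_url."""
    body = json.dumps({"url": target_url}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        method="POST",
        headers={
            # Assumed auth scheme; check the provider's docs for the real one.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
    )


request = build_extract_request("https://example.com/pricing", "YOUR_API_KEY")
print(request.get_method(), request.full_url)
```

Everything the caller supplies is the target URL and a key; proxying, rendering, and retries would happen on the service side.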

Robust Proxy Management

CrawlKit includes a built-in proxy management system that rotates proxies automatically, which helps requests get past restrictions and rate limits imposed by target sites. Data extraction can continue uninterrupted, even on websites with stringent anti-bot measures.

Comprehensive Data Types

CrawlKit supports a wide range of data types, including company data, social media profiles, and app reviews. Teams can therefore use the same platform across different projects and industries.

Reliable Output Quality

CrawlKit waits for full page loads and validates responses before returning them, so that users receive complete and accurate data. This avoids the partial or broken outputs that can occur with other scraping tools.

Use Cases of Crawlkit

CRM Enrichment

CrawlKit can enrich customer relationship management (CRM) systems by automatically pulling LinkedIn profile data: job titles, company information, and contact details for each lead. This improves the quality of sales outreach and customer insights.

Social Media Monitoring

For businesses aiming to track their competitors, CrawlKit provides an effective solution for monitoring social media growth. Users can track metrics such as follower counts, engagement rates, and top-performing posts on platforms like Instagram, allowing for informed marketing strategies.

App Review Analysis

CrawlKit aggregates app reviews from various app stores. Businesses can analyze user feedback and ratings for their applications and use the findings to improve the product and customer satisfaction.
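Once reviews have been collected, the analysis step can be quite small. The sketch below assumes each review arrives as a dictionary with a numeric `rating` field; that record shape and the `store` labels are hypothetical and chosen only for the example.

```python
from collections import Counter
from statistics import mean


def summarize_reviews(reviews):
    """Return the review count, mean rating, and a per-star histogram."""
    ratings = [review["rating"] for review in reviews]
    if not ratings:
        return {"count": 0, "average": None, "histogram": {}}
    return {
        "count": len(ratings),
        "average": round(mean(ratings), 2),
        # Sort by star value so the histogram reads low to high.
        "histogram": dict(sorted(Counter(ratings).items())),
    }


# Hypothetical records standing in for aggregated app store reviews.
sample = [
    {"store": "app_store", "rating": 5, "text": "Great"},
    {"store": "play_store", "rating": 3, "text": "Okay"},
    {"store": "play_store", "rating": 5, "text": "Love it"},
]
summary = summarize_reviews(sample)
print(summary)
```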

Market Research

CrawlKit is a powerful tool for conducting market research by extracting data from various online sources. Businesses can gather insights on industry trends, competitor offerings, and consumer behavior, enabling them to make informed strategic decisions based on comprehensive data analysis.

Frequently Asked Questions

What types of data can I extract using Crawlkit?

CrawlKit allows users to extract a variety of data types including company information, social media profiles, app reviews, and search results. This versatility makes it suitable for a range of applications across different industries.

Is there a limit to the number of API calls I can make?

CrawlKit uses a credit-based pricing model: users can make as many API calls as their credits allow. There are no monthly commitments or rate limits, which gives users flexibility in how they schedule data extraction.
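With credit-based pricing, a client may want to track its own spend before issuing calls. The following is a minimal local sketch; the `CreditBudget` class and the per-call costs used below are hypothetical and do not reflect CrawlKit's actual credit prices or any account API.

```python
class CreditBudget:
    """Client-side tally of remaining credits (not synced with any server)."""

    def __init__(self, credits: int):
        if credits < 0:
            raise ValueError("credits must be non-negative")
        self.remaining = credits

    def can_afford(self, cost: int) -> bool:
        """Return True if a call costing `cost` credits fits in the budget."""
        return cost <= self.remaining

    def spend(self, cost: int) -> int:
        """Deduct `cost` credits and return what is left."""
        if cost <= 0:
            raise ValueError("cost must be positive")
        if not self.can_afford(cost):
            raise RuntimeError("not enough credits for this call")
        self.remaining -= cost
        return self.remaining


budget = CreditBudget(10)
budget.spend(3)  # e.g. one call with an assumed cost of 3 credits
budget.spend(5)  # e.g. one call with an assumed cost of 5 credits
print(budget.remaining, budget.can_afford(5))  # prints: 2 False
```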

How does Crawlkit handle anti-bot protections?

CrawlKit is designed to manage anti-bot protections effectively. It automatically rotates proxies, handles browser rendering, and implements retries, ensuring that users can bypass blocks and collect data without interruptions.

Can I integrate Crawlkit with my existing tools?

Yes. CrawlKit is a plain HTTP API, so it works with any programming language or automation tool that can send HTTP requests. Users can integrate it into their existing workflows without vendor lock-in or restrictions.
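Because the interface is plain HTTP, a client needs nothing beyond a standard library. The sketch below uses only Python's `urllib` and `json`; the endpoint, auth header, payload, and response body are assumptions for illustration. The transport is passed in as a parameter so the example can run offline against a stub instead of a real service.

```python
import io
import json
import urllib.request

# Hypothetical endpoint; the real URL and payload shape are not given here.
API_URL = "https://api.crawlkit.example/v1/extract"


def fetch_structured(target_url, api_key, opener=urllib.request.urlopen, timeout=30.0):
    """POST one extraction request and return the decoded JSON body."""
    request = urllib.request.Request(
        API_URL,
        data=json.dumps({"url": target_url}).encode("utf-8"),
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
    )
    # `opener` is injectable so the same function works with a stub in tests.
    with opener(request, timeout=timeout) as response:
        return json.load(response)


def stub_opener(request, timeout):
    """Offline stand-in for the network: returns a canned JSON body."""
    return io.BytesIO(b'{"title": "Example Domain"}')


result = fetch_structured("https://example.com", "YOUR_API_KEY", opener=stub_opener)
print(result["title"])
```

Leaving `opener` at its default would send the request with `urllib.request.urlopen`; any other HTTP client or automation tool could take its place in the same way.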

Similar to Crawlkit

Subiq

Subiq simplifies SaaS subscription management for small teams, helping you track tools, manage spending, and avoid unnecessary costs.

Toon Tone

Toon Tone is a free daily browser game that challenges your color memory by guessing original cartoon character hues using HSB sliders across five.

FX Radar

FX Radar delivers real-time forex news in seconds with AI-powered analysis and a professional trading journal for comprehensive performance tracking.

GhostlyX Privacy-First Web Analytics

GhostlyX is a privacy-first web analytics tool that provides actionable insights without compromising user data, ensuring compliance and transparency.

Microplastic Intake App

Get science-backed insights into your daily microplastic intake from food, drinks, and air to track and reduce your exposure.

Webleadr

Webleadr helps you effortlessly find and contact web design leads, including businesses without websites, in just a few clicks.

TubeAnalytics

TubeAnalytics empowers YouTube creators with AI-driven insights and real-time analytics to enhance growth, revenue, and audience engagement.

Metric Nexus

Metric Nexus centralizes your marketing data, allowing you to effortlessly analyze performance and ask questions in plain English with Claude.