CloudBurn vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

CloudBurn

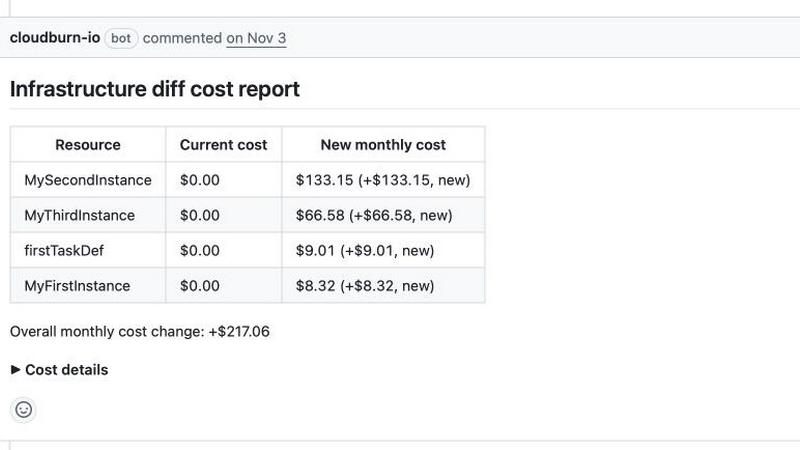

CloudBurn shows AWS cost estimates in pull requests to prevent expensive infrastructure mistakes.

Last updated: February 28, 2026

OpenMark AI

OpenMark AI benchmarks 100+ LLMs on your task: cost, speed, quality & stability. Browser-based; no provider API keys for hosted runs.

Overview

About CloudBurn

CloudBurn is a FinOps platform that moves cloud cost governance earlier in the software development lifecycle, an approach often called "shifting left". It is built for engineering teams that manage infrastructure with Infrastructure-as-Code (IaC) frameworks such as Terraform and AWS CDK. Its goal is to prevent surprise AWS bills by showing cost estimates before anything is deployed.

Traditional cloud financial management is reactive: teams often discover budget overruns weeks after deployment, when resources are already provisioned and accruing charges. CloudBurn instead integrates into the existing code review and CI/CD workflow. It analyzes pull requests that contain infrastructure changes, estimates the monthly cost impact using current AWS pricing data, and posts the result as a comment on the pull request.

Developers, platform engineers, and FinOps practitioners can then discuss cost during design and review, when architectural changes are still cheap to make. Catching expensive misconfigurations before they reach production makes cloud cost a metric that is weighed in every infrastructure decision rather than a post-deployment surprise, and it prevents wasteful spending before it occurs.
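As a rough illustration of the pull request cost estimate described above, the sketch below compares the resources before and after a change and formats the monthly difference as a comment line. It is not CloudBurn's implementation: the function names, the instance sizes, and the hourly prices are all hypothetical placeholders, and a real tool would read the resource diff from the IaC plan and look up current AWS pricing.

```python
HOURS_PER_MONTH = 730  # common billing approximation: 8760 hours / 12 months

# Hypothetical hourly prices in USD. Placeholders only, not real AWS rates.
HOURLY_PRICE = {"small": 0.10, "large": 0.40}


def monthly_cost(resources):
    """Estimate the monthly cost of a {instance_size: count} mapping."""
    return sum(
        HOURLY_PRICE[size] * count * HOURS_PER_MONTH
        for size, count in resources.items()
    )


def cost_delta(before, after):
    """Monthly cost change that a pull request would introduce."""
    return monthly_cost(after) - monthly_cost(before)


def format_comment(before, after):
    """Render the delta as a one-line pull request comment."""
    delta = cost_delta(before, after)
    sign = "+" if delta >= 0 else "-"
    return f"Estimated monthly cost change: {sign}${abs(delta):,.2f}"


# Example: the change adds one "large" instance to two existing "small" ones.
before = {"small": 2}
after = {"small": 2, "large": 1}
print(format_comment(before, after))
```

The point of the design is that the delta is computed from the proposed change alone, so reviewers see the cost impact before any resource exists.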

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe what you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs, so you see variance, not a single lucky output.

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

You get side-by-side results with real API calls to models, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the cheapest token price on a datasheet.
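To make "stability across repeat runs" and "quality relative to what you pay" concrete, here is a minimal sketch of how such figures could be derived from repeated runs of the same task. It is an assumption about the general idea, not OpenMark AI's scoring method: the `summarize` function, the model names, and all scores and costs are invented for illustration.

```python
from statistics import mean, pstdev


def summarize(runs):
    """Summarize repeated runs of one model.

    runs: list of (quality_score, cost_usd) tuples, one per repeat run.
    """
    scores = [score for score, _ in runs]
    costs = [cost for _, cost in runs]
    avg_quality = mean(scores)
    avg_cost = mean(costs)
    return {
        "mean_quality": avg_quality,
        # Spread of quality across runs: lower means more stable output.
        "quality_stdev": pstdev(scores),
        "mean_cost": avg_cost,
        # Cost efficiency: average quality obtained per dollar spent.
        "quality_per_dollar": avg_quality / avg_cost,
    }


# Illustrative numbers only; not measurements of any real model.
results = {
    "model-a": [(0.90, 0.020), (0.50, 0.020), (0.70, 0.020)],
    "model-b": [(0.70, 0.010), (0.70, 0.010), (0.70, 0.010)],
}

for name, runs in results.items():
    s = summarize(runs)
    print(
        f"{name}: quality={s['mean_quality']:.2f} "
        f"stdev={s['quality_stdev']:.3f} "
        f"quality/$={s['quality_per_dollar']:.1f}"
    )
```

In this made-up data both models have the same average quality, but one varies widely between runs and costs more per request, which a single run or a token price alone would not reveal.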

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.