Agenta vs Requestly

Side-by-side comparison to help you choose the right tool.

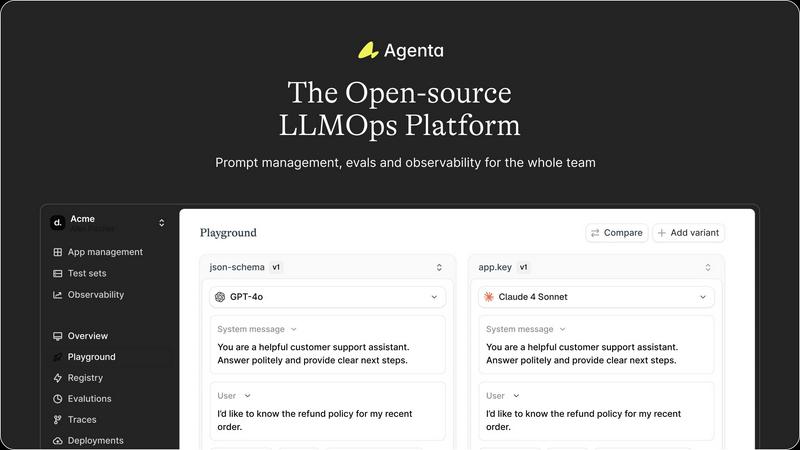

Agenta is an open-source platform that helps teams build and manage reliable AI applications together.

Last updated: March 1, 2026

Requestly is a lightweight, git-native API client that enables effortless testing and collaboration without requiring a login.

Last updated: April 4, 2026

Feature Comparison

Agenta

Unified Playground for Experimentation

Agenta provides a centralized playground where teams can experiment with different prompts, models, and parameters side by side in a single interface. This removes the need for scattered tools and documents and allows direct comparison and rapid iteration. Every prompt has a complete version history, so each change is tracked and reversible, which encourages a systematic approach to development rather than ad-hoc "vibe testing."

Comprehensive Evaluation Framework

The platform replaces guesswork with evidence through a robust evaluation system. Teams can create automated test suites using LLM-as-a-judge, custom code evaluators, or built-in metrics. Crucially, Agenta can evaluate full agentic traces, assessing each intermediate reasoning step rather than only the final output. It also integrates human evaluation workflows, so domain experts and product managers can provide qualitative feedback directly within the platform.
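As a rough illustration of what a custom code evaluator does, the sketch below scores a model output against a test case and averages the scores across a small suite. It is plain Python, not Agenta's evaluator interface: the function names (`exact_match`, `keyword_coverage`, `run_suite`) and the test-case fields (`output`, `keywords`) are hypothetical, chosen only to show the idea of returning a numeric score between 0 and 1 for each case.

```python
import re
from statistics import mean

# Hypothetical evaluators for illustration; this is not Agenta's actual evaluator API.


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, and collapse runs of whitespace."""
    text = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(text.split())


def exact_match(output: str, expected: str) -> float:
    """Score 1.0 if the normalized output equals the normalized expected answer, else 0.0."""
    return 1.0 if normalize(output) == normalize(expected) else 0.0


def keyword_coverage(output: str, keywords: list[str]) -> float:
    """Score the fraction of required keywords or phrases that appear in the output."""
    if not keywords:
        return 1.0
    # Pad with spaces so matches land on whole words, including multi-word phrases.
    padded = f" {normalize(output)} "
    hits = sum(1 for kw in keywords if f" {normalize(kw)} " in padded)
    return hits / len(keywords)


def run_suite(cases: list[dict]) -> float:
    """Average the keyword-coverage score over a list of test cases."""
    return mean(keyword_coverage(c["output"], c["keywords"]) for c in cases)
```

For example, an output of "The refund is processed within 5 business days." checked against the keywords "refund", "5 business days", and "receipt" scores 2/3, because only the first two appear.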

Production Observability and Debugging

Agenta offers deep observability by tracing every LLM request in production, making it possible to pinpoint the exact point of failure when issues arise. Teams can annotate these traces collaboratively and, with a single click, turn any problematic trace into a test case in the playground, closing the feedback loop. Live monitoring complements this by detecting performance regressions and collecting real user feedback.

Collaborative Workflow for Cross-Functional Teams

Designed as a single source of truth, Agenta breaks down silos between developers, product managers, and domain experts. It gives experts a safe, code-free UI for editing and experimenting with prompts. Because the API and UI offer full parity, programmatic and manual workflows meet in one central hub, and the entire team can take part in experiments, evaluations, and debugging.

Requestly

Git-Native API Collections

Requestly stores API collections as local files that integrate directly with Git. Developers can version-control their API definitions, track changes through commits, review modifications in pull requests, and collaborate with teammates using the same workflows they use for source code. This avoids the lock-in and sync issues common with cloud-only platforms and keeps API definitions portable, auditable, and under your control.

Local-First & Login-Free Operation

Requestly follows a local-first model that prioritizes developer privacy and immediate productivity. All data, including collections, environments, and logs, is stored on your local device. This improves security and performance and lets you start using the application immediately, with no sign-up. Core functionality does not depend on a central cloud, so access is uninterrupted and you retain ownership of your data.

Advanced REST & GraphQL Client

Requestly provides a comprehensive playground for testing both REST and GraphQL endpoints. For REST, it supports all standard methods, custom headers, and body types. For GraphQL, it includes a client with schema introspection, which automatically fetches your GraphQL schema to provide auto-completion, query validation, and documentation lookup directly in the editor, speeding up API exploration and testing.

Free Team Collaboration Workspaces

Unlike many competitors that put collaboration behind a paywall, Requestly offers team features for free. Users can create shared workspaces to organize and manage APIs collectively. These workspaces include role-based access control (RBAC), so you can assign Admin, Editor, or Viewer permissions to team members, keeping teamwork secure and organized at no subscription cost.
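To make the Admin/Editor/Viewer model concrete, here is a minimal role-based access check. The three role names come from the description above, but the permissions attached to each role (`read`, `write`, `manage_members`) and the `Workspace` class are assumptions for illustration; they do not describe Requestly's internal implementation or its exact per-role rights.

```python
from enum import Enum


class Role(Enum):
    ADMIN = "admin"
    EDITOR = "editor"
    VIEWER = "viewer"


# Hypothetical permission sets; Requestly's actual per-role permissions may differ.
PERMISSIONS: dict[Role, frozenset[str]] = {
    Role.VIEWER: frozenset({"read"}),
    Role.EDITOR: frozenset({"read", "write"}),
    Role.ADMIN: frozenset({"read", "write", "manage_members"}),
}


def can(role: Role, action: str) -> bool:
    """Return True if the given role is allowed to perform the action."""
    return action in PERMISSIONS[role]


class Workspace:
    """A shared workspace mapping member names to roles (illustrative only)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.members: dict[str, Role] = {}

    def add_member(self, user: str, role: Role) -> None:
        self.members[user] = role

    def authorize(self, user: str, action: str) -> bool:
        # Non-members get no access; members get whatever their role allows.
        role = self.members.get(user)
        return role is not None and can(role, action)
```

Keeping permissions in a single role-to-actions table means a role's rights are defined in one place, and each check reduces to a set lookup.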

Use Cases

Agenta

Streamlining Enterprise LLM Application Development

Large organizations developing customer-facing AI assistants or internal copilots use Agenta to bring structure to their development process. It enables cross-functional teams to collaborate efficiently, moving from disjointed prototyping in Slack threads and spreadsheets to a governed lifecycle with version control, systematic evaluation against business metrics, and a smooth handoff from experimentation to stable, observable deployment.

Implementing Rigorous AI Quality Assurance

Teams that require high reliability and consistency, such as those in legal, financial, or healthcare sectors, leverage Agenta to build a rigorous QA pipeline for their LLM applications. They use the platform to create comprehensive evaluation datasets, run automated and human-in-the-loop evaluations on every proposed change, and monitor production performance to ensure no regressions slip through, thereby building evidence-based trust in their AI systems.

Debugging and Optimizing Complex AI Agents

Developers building sophisticated multi-step agents with frameworks like LangChain use Agenta's observability features to debug complex failures. By examining detailed traces of each step in an agent's reasoning, teams can quickly identify where a chain fails, save those instances as tests, and iteratively refine prompts and logic in the playground until robustness is achieved.

Enabling Domain Expert Collaboration

Companies where subject matter experts (e.g., doctors, lawyers, analysts) are crucial for validating AI output use Agenta to democratize the development process. The platform's intuitive UI allows these non-technical experts to directly participate in prompt engineering, run evaluations, and provide annotated feedback on real production traces, ensuring the AI aligns closely with specialized domain knowledge.

Requestly

Migrating from Legacy API Clients

Teams looking to escape bloated, slow, or restrictive API platforms can use Requestly as their modern, lightweight successor. The one-click import feature allows for effortless migration of collections, environments, and scripts from tools like Postman. Combined with local storage and Git integration, this provides a seamless transition to a faster, more controlled, and developer-friendly API workflow.

Secure Enterprise API Development

Enterprises with strict data security and compliance requirements can rely on Requestly's local-first model and enterprise-ready features. By keeping sensitive API data on-premises or within controlled local environments, and by using SOC 2 compliance, SSO integration, and detailed audit logs, development teams can stay productive without compromising corporate security policies or data governance.

CI/CD Pipeline Integration

Development and DevOps teams can integrate Requestly's Git-native collections directly into their Continuous Integration and Delivery pipelines. Since collections are stored as files in a repository, automated scripts can run API tests, validate contracts, or deploy mock servers as part of the build process. This ensures API reliability is continuously verified alongside code changes, promoting higher software quality.

Collaborative API Design and Testing

API teams, including frontend and backend developers, can use shared workspaces to collaborate in real-time on API design and testing. Backend developers can publish endpoints, while frontend developers can immediately start building and testing against them using environment variables and pre-request scripts. This parallel workflow, facilitated by free collaboration tools, reduces development cycles and improves team alignment.

Overview

About Agenta

Agenta is an open-source LLMOps platform built to address the fundamental challenge of building reliable, production-grade applications with large language models. It acts as a unified operating system for AI development teams, bridging the gap between experimental prototyping and stable deployment. The platform is designed for collaborative teams of developers, product managers, and subject matter experts who need to move beyond scattered, ad-hoc workflows.

Its core value lies in centralizing the entire LLM application lifecycle, from prompt experimentation and rigorous evaluation to comprehensive observability, in a single, coherent platform. By replacing guesswork with evidence-based processes, Agenta lets organizations iterate systematically on prompts, validate changes against automated and human evaluations, and debug issues quickly using real production data. It is model-agnostic and framework-friendly, integrating with popular tools such as LangChain and LlamaIndex, which prevents vendor lock-in and provides the infrastructure needed to apply LLMOps best practices at scale. Agenta turns a chaotic AI development process into a structured, collaborative, and data-driven discipline.

About Requestly

Requestly is a modern, developer-centric API client engineered to provide development teams with unparalleled control, security, and efficiency in their API workflows. It stands as a powerful alternative to traditional, often bloated, cloud-based API platforms by championing a local-first architecture. This foundational principle ensures that all your sensitive API collections, environment variables, and request history are stored directly on your machine, not on external servers, giving you complete data sovereignty and enhanced privacy. Designed for teams that value seamless integration into their existing development lifecycle, Requestly is inherently Git-native. It allows API collections to be stored as simple, version-controllable files, enabling collaboration through the same familiar Git workflows used for code.

The platform is built to accelerate the entire API development process, from design and testing to debugging and collaboration. It offers robust support for both REST and GraphQL APIs, featuring intelligent tools like schema introspection and auto-completion. Integrated AI capabilities assist in writing requests, generating tests, and debugging, significantly reducing manual effort. Furthermore, Requestly breaks down collaboration barriers with its generous free tier, offering shared workspaces and role-based access control at no cost. With no mandatory login required to start, it empowers over 300,000 developers from leading companies like Microsoft, Amazon, and Google to begin testing APIs instantly, making it the agile and trustworthy choice for modern development teams.

Frequently Asked Questions

Agenta FAQ

Is Agenta truly open-source?

Yes, Agenta is a fully open-source platform. The core codebase is publicly available on GitHub, allowing users to inspect, modify, and contribute to the software. This open model ensures transparency, prevents vendor lock-in, and allows the community to influence the product's roadmap while providing the freedom to self-host the platform.

How does Agenta integrate with existing AI stacks?

Agenta is designed to be model-agnostic and framework-friendly. It offers seamless integrations with popular LLM providers (like OpenAI), orchestration frameworks (such as LangChain and LlamaIndex), and can be extended with custom evaluators. This flexibility allows teams to incorporate Agenta into their existing workflows without disrupting their current toolchain.

Can non-technical team members really use Agenta effectively?

Absolutely. A key design principle of Agenta is to bridge the gap between technical and non-technical roles. Product managers and domain experts can use the web UI to experiment with prompts in the playground, configure and view evaluation results, and annotate production traces, all without writing a single line of code.

What is the difference between Agenta and simple prompt management tools?

While basic tools might help version prompts, Agenta provides a complete LLMOps lifecycle platform. It combines prompt management with integrated evaluation (automated and human), full production observability with trace debugging, and collaborative workflows. This holistic approach ensures that prompts are not just managed but are systematically improved, validated, and monitored within the context of the entire application.

Requestly FAQ

How does Requestly handle data privacy and security?

Requestly employs a local-first architecture, meaning your primary API data (collections, environment variables, request history) is stored directly on your computer and never leaves your machine unless you explicitly choose to sync it via Git or a shared workspace. This approach provides inherent security. For enterprise collaboration, Requestly offers additional security layers, including data encryption, SOC 2 compliance, SSO, and role-based access control, to protect shared information.

Can I import my existing Postman collections into Requestly?

Yes, importing your work from Postman is a straightforward process. Requestly provides a dedicated one-click import feature that allows you to seamlessly bring in your complete Postman collections, including all requests, folders, environment variables, and pre-request/test scripts. This ensures a smooth and immediate transition without the need to manually recreate your API testing setup.

Is team collaboration really free in Requestly?

Absolutely. Requestly's free tier includes core collaboration features that many other API clients reserve for paid plans. You can create shared workspaces, invite an unlimited number of team members, and manage their permissions using role-based access control (Admin, Editor, Viewer) at no cost. This makes it an ideal tool for startups, open-source projects, and teams wanting to collaborate without budget constraints.

What makes Requestly "Git-native"?

Requestly is Git-native because it stores your API collections as plain-text JSON files on your local filesystem. You can keep these files in any Git repository (for example, on GitHub, GitLab, or Bitbucket) and use standard Git commands for version control, branching, merging, and code review of your API specifications. Collaboration therefore happens through Git workflows, which makes Requestly a natural fit for developers.
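One reason plain-text collections work well with Git is that deterministic serialization keeps diffs small: if the same collection always produces the same text, only real edits show up in a commit. The sketch below shows that idea with Python's standard `json` module. The collection structure (`name`, `requests`, `method`, `url`) is a hypothetical schema, not Requestly's actual file format, and the helper names are illustrative.

```python
import json
from pathlib import Path


def to_stable_json(collection: dict) -> str:
    """Serialize a collection to JSON whose text does not depend on key insertion order."""
    # sort_keys orders dictionary keys; indent=2 puts one value per line for readable diffs.
    return json.dumps(collection, indent=2, sort_keys=True, ensure_ascii=False) + "\n"


def save_collection(collection: dict, path: Path) -> None:
    """Write the collection to disk so it can be committed to a Git repository."""
    path.write_text(to_stable_json(collection), encoding="utf-8")
```

Note that `sort_keys` only orders dictionary keys; list order, such as the sequence of requests in a collection, is preserved, which is usually what you want.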

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform designed to help development teams build and manage reliable AI applications. It falls into the category of development tools focused on the operational lifecycle of large language models, providing a unified system for experimentation, evaluation, and deployment. Users may explore alternatives for various reasons, including specific budget constraints, the need for different feature sets like advanced monitoring or native CI/CD integration, or a preference for a managed service over self-hosted open-source software. Organizational requirements around scalability, security compliance, and existing tech stack compatibility also drive the search for other solutions. When evaluating alternatives, key considerations should include the platform's approach to collaborative experimentation, the robustness of its evaluation and testing frameworks, and its observability capabilities for production applications. The ideal tool should align with your team's workflow, support the LLM frameworks you use, and provide a clear path from prototype to stable, monitored deployment.

Requestly Alternatives

Requestly is a modern API client that falls into the category of developer tools, specifically designed for efficient API testing and collaboration. It distinguishes itself with a local-first, git-based architecture that gives teams full control over their data and workflow versioning. Users often explore alternatives for various reasons, including specific budget constraints, the need for different feature sets like advanced monitoring or public API documentation, or a preference for cloud-centric versus local-first application models. Platform compatibility, such as operating system support or integration with certain CI/CD pipelines, also drives the search for other solutions. When evaluating alternatives, key considerations should include the core philosophy of data storage and security, the depth of collaboration features for team workflows, and the overall approach to streamlining the API development lifecycle. The ideal tool should align with your team's technical requirements, security policies, and preferred method of working, whether that's entirely cloud-based, self-hosted, or a hybrid model.