OpenMark AI vs qtrl.ai

Side-by-side comparison to help you choose the right tool.

OpenMark AI benchmarks 100+ LLMs on your task: cost, speed, quality & stability. Browser-based; no provider API keys for hosted runs.

qtrl.ai

qtrl.ai empowers QA teams to scale testing with AI while ensuring full control, governance, and seamless integration.

Last updated: March 4, 2026

Overview

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe what you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs. Repeating each run shows you the variance in a model's output rather than a single lucky result.

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

You get side-by-side results with real API calls to models, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the cheapest token price on a datasheet.
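To make these metrics concrete, here is a minimal Python sketch of how repeat-run results could be summarized per model. It is not OpenMark AI's API or scoring method: the `Run` record, the `summarize` function, the 0-to-1 quality scale, and the sample numbers are all hypothetical, and "quality per dollar" is only one possible reading of cost efficiency.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Run:
    """One benchmark run of one model (hypothetical record, not a real schema)."""
    model: str
    quality: float    # scored quality, assumed to lie in [0, 1]
    cost_usd: float   # cost of this single request
    latency_s: float  # wall-clock latency in seconds


def summarize(runs):
    """Group runs by model and compute mean, spread, and cost efficiency."""
    by_model = {}
    for run in runs:
        by_model.setdefault(run.model, []).append(run)

    summary = {}
    for model, model_runs in by_model.items():
        qualities = [r.quality for r in model_runs]
        avg_quality = mean(qualities)
        avg_cost = mean(r.cost_usd for r in model_runs)
        summary[model] = {
            "mean_quality": avg_quality,
            # Population standard deviation across repeat runs: lower is more stable.
            "quality_stdev": pstdev(qualities),
            "mean_cost_usd": avg_cost,
            "mean_latency_s": mean(r.latency_s for r in model_runs),
            # One possible definition of cost efficiency (an assumption).
            "quality_per_dollar": avg_quality / avg_cost,
        }
    return summary


# Made-up sample data: model-a scores higher on average but varies between runs;
# model-b scores lower but is consistent and cheaper.
runs = [
    Run("model-a", 0.9, 0.010, 1.2),
    Run("model-a", 0.5, 0.010, 1.4),
    Run("model-a", 0.7, 0.010, 1.3),
    Run("model-b", 0.6, 0.002, 0.8),
    Run("model-b", 0.6, 0.002, 0.9),
    Run("model-b", 0.6, 0.002, 0.7),
]
summary = summarize(runs)

for model, stats in summary.items():
    print(model, stats)
```

In this invented data, the model with the better average score is also the less stable and less cost-efficient one, which is the kind of trade-off a single run or a token price list would hide.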

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.

About qtrl.ai

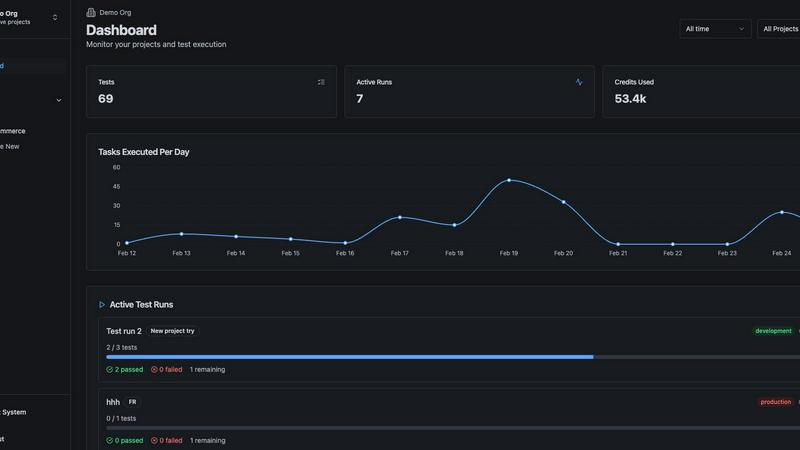

qtrl.ai is a quality assurance platform that helps software teams streamline their QA processes while keeping control and governance. It combines enterprise-grade test management with AI-driven automation in a centralized hub where teams can organize test cases, plan test runs, and track quality metrics through real-time dashboards. This visibility lets engineering leads and QA managers see what has been tested, what is passing, and where risks may remain.

The main strength of qtrl.ai is that it introduces automation gradually. Rather than taking a black-box approach, qtrl lets teams begin with manual test management and introduce autonomous agents progressively, as the team is ready for them. These agents can generate UI tests from plain-English descriptions, adapt to application changes, and execute tests across multiple browsers and environments at scale. This makes qtrl.ai a fit for product-led engineering teams, QA groups transitioning from manual testing, organizations modernizing outdated workflows, and enterprises with strict compliance and auditability requirements. qtrl.ai aims to bridge the gap between the slow pace of manual testing and the complexity of traditional automation.