Mod vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

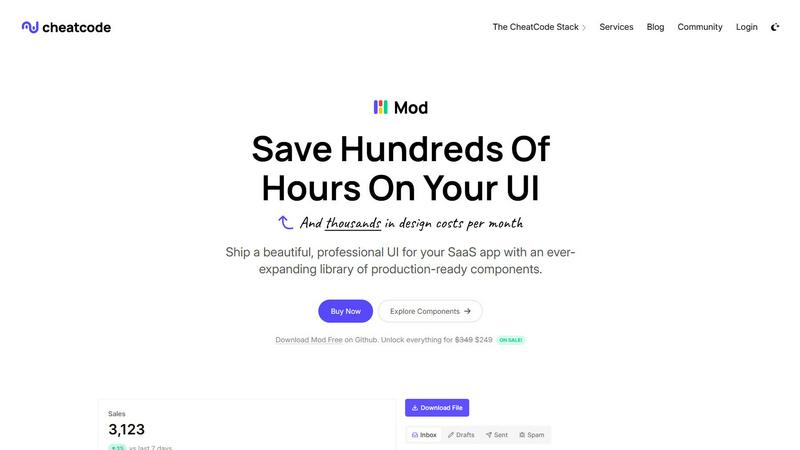

Mod is a CSS framework that accelerates SaaS development with a comprehensive library of ready-to-use UI components.

OpenMark AI instantly benchmarks over 100 AI models on your specific task to find the optimal balance of cost, speed, and quality.

Last updated: March 26, 2026

Feature Comparison

Mod

Extensive Component Library

Mod provides an expansive suite of over 88 meticulously crafted, reusable UI components. This library covers the entire spectrum of interface needs for a SaaS product, including complex data tables, interactive forms, navigation bars, modals, cards, buttons, and feedback elements like alerts and toasts. Each component is built with accessibility and semantic HTML in mind, ensuring a solid foundation. This depth means developers rarely need to build common elements from scratch, instead assembling interfaces from reliable, pre-tested building blocks. The components are designed to work in harmony, guaranteeing visual consistency across your entire application without any extra effort.

Comprehensive Design System & Theming

Beyond individual components, Mod delivers a complete, coherent design system. This includes 168 distinct style utilities and two fully realized themes (light and dark) that can be applied globally. The system encompasses typography scales, a cohesive color palette with semantic meaning, consistent spacing units, and shadow elevations. The built-in, easy-to-implement dark mode support is a significant advantage for modern applications. This systematic approach ensures that every element you place adheres to the same visual rules, creating a professional and polished look that users instinctively trust, all without requiring a dedicated designer.

Framework Agnostic & Easy Integration

A standout feature of Mod is its complete independence from any specific JavaScript framework or meta-framework. It is crafted as pure, modern CSS with intuitive class names, allowing it to seamlessly integrate with Next.js, Nuxt, SvelteKit, Vite, Ruby on Rails, Django, PHP applications, and virtually any other web technology. This future-proofs your investment, as you can adopt Mod today and carry it forward through any stack migration. Integration is typically as simple as linking a CSS file or installing a package, meaning you can inject a complete design language into a new or existing project in minutes, not days.

Mobile-First & Fully Responsive

Every component and layout utility in Mod is constructed with a mobile-first, responsive philosophy at its core. The styles ensure that interfaces look and function flawlessly on devices of all sizes, from compact smartphones to large desktop monitors. Responsive behavior is baked in through intelligent use of CSS Grid, Flexbox, and responsive utility classes. This eliminates the need to write complex, custom media queries for common adjustments, allowing developers to build for all platforms simultaneously and ensuring a superior user experience regardless of how customers access your SaaS application.

OpenMark AI

Plain Language Task Benchmarking

OpenMark AI removes the barrier of technical complexity by allowing users to define their test scenarios using simple, descriptive language. You don't need to write complex scripts or structured prompts; you just describe what you want the AI to do, such as "extract dates and product names from customer service emails" or "generate three taglines for a new productivity app." The platform intelligently configures the benchmark, enabling rapid, iterative testing of your actual workflow without any coding required.

Multi-Model Comparison in One Session

The platform's core strength is its ability to run your described task against a massive selection of LLMs simultaneously. Instead of manually testing models one by one across different interfaces and dashboards, you launch a single benchmark job. OpenMark AI coordinates real API calls to all selected models, presenting the results in a unified dashboard for immediate, apples-to-apples comparison across quality scores, cost, and speed.

Variance and Stability Analysis

OpenMark AI provides deep insight into model reliability by running your task multiple times per model. This feature measures output consistency, showing you the variance in responses. It answers the critical question: "Will this model perform consistently when deployed at scale?" This focus on stability, beyond a single output, helps identify models that are robust and dependable versus those that are unpredictable.
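As a rough illustration of the idea (not OpenMark AI's actual method, which the text does not specify), assume each repeated run produces a numeric quality score. The mean and standard deviation per model then give a simple view of stability. The model names and scores below are invented for the example.

```python
from statistics import mean, pstdev


def stability_summary(scores_by_model):
    """Return {model: (mean_score, std_dev)} for repeated-run scores."""
    return {
        model: (mean(scores), pstdev(scores))
        for model, scores in scores_by_model.items()
    }


# Hypothetical scores from five repeated runs of the same task.
runs = {
    "model-a": [0.90, 0.91, 0.89, 0.90, 0.90],
    "model-b": [0.99, 0.60, 0.95, 0.70, 0.98],
}

summary = stability_summary(runs)
for model, (avg, spread) in summary.items():
    print(f"{model}: mean={avg:.3f} std={spread:.3f}")
```

In this made-up data, model-b reaches higher peaks but varies far more between runs, which is the kind of pattern a single output would hide.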

Integrated Cost-Per-Request Calculation

Every benchmark includes precise, real-time calculation of the cost incurred for each API call to each model. This goes beyond listed token prices, showing you the actual expense of achieving a certain quality level for your specific task. This allows for true cost-efficiency analysis, helping you select a model that delivers the required performance at a sustainable operational cost, optimizing your AI budget effectively.
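The arithmetic behind a per-request cost can be sketched as follows. This is an assumption about how such a figure is typically derived from token counts and per-million-token prices, not a description of OpenMark AI's internals. The token counts, prices, and the `cost_per_quality_point` helper are hypothetical.

```python
def request_cost(input_tokens, output_tokens,
                 price_in_per_million, price_out_per_million):
    """Cost in dollars of one request, given token counts and
    per-million-token prices for input and output."""
    return (input_tokens * price_in_per_million
            + output_tokens * price_out_per_million) / 1_000_000


def cost_per_quality_point(cost, quality_score):
    """Dollars spent per unit of quality; lower is better."""
    if quality_score <= 0:
        raise ValueError("quality_score must be positive")
    return cost / quality_score


# Hypothetical request: 1,200 input tokens and 300 output tokens,
# priced at $3 and $15 per million tokens respectively.
cost = request_cost(1200, 300, 3.0, 15.0)
efficiency = cost_per_quality_point(cost, 0.9)
print(f"cost=${cost:.4f}, per quality point=${efficiency:.4f}")
```

Dividing cost by the quality score is one simple way to compare a cheap, weaker model against an expensive, stronger one on the same task.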

Use Cases

Mod

Rapid Prototyping and MVP Development

For entrepreneurs and startups, speed to market is critical. Mod is the perfect tool for rapidly prototyping ideas and building a Minimum Viable Product (MVP). With its vast component library, developers can assemble a fully functional, visually credible front-end in a fraction of the time it would take to design and code from zero. This allows founders to validate business hypotheses with real users quickly, gather feedback, and iterate without being bottlenecked by UI development, enabling a lean and efficient startup methodology.

Modernizing Legacy Application UIs

Many established businesses struggle with outdated, inconsistent user interfaces that harm user satisfaction and productivity. Mod provides a strategic path for incremental UI modernization. Teams can integrate Mod's CSS into specific sections or new features of a legacy application—be it Rails, Django, or a traditional server-rendered app—without a full, risky rewrite. This allows for a gradual, low-risk refresh that delivers immediate visual and UX improvements, boosting internal morale and customer perception while planning for larger architectural changes.

Standardizing Design Across Development Teams

In growing engineering organizations, inconsistent UI implementation across different teams and features is a common pain point. Adopting Mod as a company-wide design system standardizes the visual language and component implementation. It serves as a single source of truth for front-end styles, reducing design debt, eliminating repetitive discussions about pixels and colors, and ensuring a unified brand experience. This leads to more efficient collaboration, faster onboarding for new developers, and a consistently professional product.

Building Internal Tools and Admin Panels

Internal dashboards, admin panels, and operational tools often receive fewer design resources but still require clarity, functionality, and efficiency. Mod is well suited to this use case. Developers can use the pre-built data tables, form controls, chart containers, and layout components to construct powerful internal interfaces quickly. The result is a tool that is not only highly functional but also pleasant and intuitive for employees to use, improving operational efficiency and reducing training time.

OpenMark AI

Pre-Deployment Model Selection for New Features

Development teams building a new AI-powered feature, such as a content summarizer or a customer support chatbot, can use OpenMark AI to empirically determine the best foundation model. By benchmarking prototypes of their exact task, they can select the optimal model based on a combination of accuracy, response time, and cost before committing to an integration, reducing risk and technical debt.

Validating Model Performance for Critical Workflows

For companies with existing AI integrations in sensitive areas like data extraction, legal document review, or medical research assistance, OpenMark serves as a validation suite. Teams can regularly benchmark their current model against new alternatives to ensure they are still using the most effective and cost-efficient option, or to test the impact of model updates on their specific outputs.

Optimizing Agentic or Multi-Step AI Systems

When designing complex AI agents that involve routing, classification, or chaining multiple LLM calls, choosing the right model for each step is vital. Engineers can use OpenMark to benchmark subtasks—like intent classification or query reformulation—to find specialized models that improve overall system performance and reliability while controlling cascading costs.
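One way to turn per-subtask benchmark results into a routing decision is to pick, for each step, the cheapest model that clears a quality threshold. The sketch below assumes results are available as simple records. The field names, model names, numbers, and the `pick_model` helper are all hypothetical, not part of OpenMark AI.

```python
def pick_model(results, min_quality):
    """Return the cheapest model whose quality meets the threshold, or None."""
    eligible = [r for r in results if r["quality"] >= min_quality]
    if not eligible:
        return None
    return min(eligible, key=lambda r: r["cost"])["model"]


# Hypothetical benchmark results for two subtasks of an agent pipeline.
subtask_results = {
    "intent_classification": [
        {"model": "small-model", "quality": 0.93, "cost": 0.0002},
        {"model": "large-model", "quality": 0.96, "cost": 0.0040},
    ],
    "query_reformulation": [
        {"model": "small-model", "quality": 0.71, "cost": 0.0003},
        {"model": "large-model", "quality": 0.92, "cost": 0.0050},
    ],
}

routing = {
    step: pick_model(rows, min_quality=0.90)
    for step, rows in subtask_results.items()
}
print(routing)
```

With these invented numbers, the small model is good enough for classification while reformulation needs the larger one, so only one step pays the higher price.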

Academic and Industrial AI Research

Researchers and analysts focused on LLM capabilities can utilize OpenMark's structured testing environment to conduct comparative studies. The platform's ability to run consistent prompts across many models and measure variance provides robust, reproducible data for analyzing model strengths, weaknesses, and evolution across different task types and difficulty levels.

Overview

About Mod

In the modern landscape of software as a service (SaaS), the user interface is not merely a layer of presentation; it is the primary conduit for user experience, engagement, and value perception. Mod emerges as a definitive solution to the perennial challenge of crafting beautiful, functional, and consistent UIs without the prohibitive costs of custom design or the fragility of piecing together disparate libraries. It is a comprehensive CSS framework meticulously engineered for building SaaS applications. Designed for developers of all stripes—from solo founders and indie hackers to product teams within established enterprises—Mod provides a robust, production-ready design system out of the box. Its core value proposition is profound acceleration. By offering a vast, cohesive collection of pre-styled components and utilities, Mod eliminates countless hours of design decision-making, CSS debugging, and cross-browser compatibility testing. This allows developers to focus their intellectual energy on core application logic, unique features, and business innovation, dramatically shortening the path from concept to a polished, professional product. Its framework-agnostic philosophy ensures this velocity is not sacrificed regardless of your chosen technology stack, making it a versatile and enduring tool in any developer's arsenal.

About OpenMark AI

OpenMark AI is a sophisticated, web-based platform designed to revolutionize how developers and product teams select and validate large language models (LLMs) for their specific applications. It moves beyond theoretical benchmarks and marketing claims by enabling task-level, real-world performance testing. The core premise is simple yet powerful: users describe their exact task in plain language, and OpenMark AI executes that prompt against a vast catalog of over 100 models in a single, unified session. This process generates comprehensive, side-by-side comparisons based on actual API calls, measuring critical metrics like scored output quality, cost per request, latency, and—crucially—output stability across multiple runs. By revealing variance and consistency, not just a single "lucky" output, OpenMark provides the empirical data needed to make informed, cost-efficient decisions before shipping an AI feature. It eliminates the logistical headache of managing multiple API keys and configurations, offering a hosted, credit-based system that grants immediate access to models from leading providers like OpenAI, Anthropic, and Google. Ultimately, OpenMark AI is built for professionals who prioritize finding the optimal balance between performance, reliability, and operational cost for their unique use case.

Frequently Asked Questions

Mod FAQ

What makes Mod different from other CSS frameworks like Tailwind or Bootstrap?

While frameworks like Tailwind provide low-level utility classes and Bootstrap offers a component library, Mod is specifically architected for the SaaS domain. It combines the best of both: a comprehensive, high-quality component library (like Bootstrap) that is built upon a consistent, customizable design system with utility classes (conceptually like Tailwind). The key differentiators are its SaaS-focused component selection, its built-in light/dark theme system, its extensive icon suite, and its framework-agnostic purity, offering a more opinionated and complete starting point for business applications.

Is Mod suitable for completely custom designs, or will my app look generic?

Mod is designed as a foundation for custom design, not a constraint. The included themes are professional and polished starting points. However, the entire system is built with customization in mind. Through CSS custom properties (variables) and a well-organized structure, you can easily modify colors, typography, spacing, and component specifics to create a unique brand identity. It provides the consistency and speed of a framework while leaving the door open for deep customization, preventing a "generic" look.

How does the framework-agnostic approach work in practice?

Mod is distributed as standard CSS. You can include it via a CDN link, import it into your main JavaScript/TypeScript file, or install it as an npm package and import the CSS. Since it uses plain CSS class names (e.g., .btn, .card, .grid), it works with any technology that can render HTML and apply CSS classes. There are no framework-specific dependencies, JavaScript bindings, or proprietary syntax to learn, ensuring compatibility with your current and future tech stack.
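To illustrate the point that any technology able to emit HTML can use plain class names, here is a minimal server-side sketch in Python. The `.card` and `.btn` classes come from the examples above. The stylesheet path, the markup structure, and the function names are assumptions for illustration, so check Mod's own documentation for the real file location and component markup.

```python
from html import escape

# Hypothetical stylesheet path; substitute the CDN URL or package path
# given in Mod's documentation.
STYLESHEET_HREF = "/static/mod.css"


def render_card(title, button_label):
    """Build an HTML fragment styled only through plain CSS class names."""
    return (
        '<div class="card">'
        f"<h2>{escape(title)}</h2>"
        f'<button class="btn">{escape(button_label)}</button>'
        "</div>"
    )


def render_page(body):
    """Wrap a fragment in a page that links the stylesheet."""
    return (
        "<!doctype html><html><head>"
        f'<link rel="stylesheet" href="{escape(STYLESHEET_HREF)}">'
        f"</head><body>{body}</body></html>"
    )


page = render_page(render_card("Billing", "Upgrade"))
print(page)
```

Nothing here depends on a JavaScript framework: the same class attributes would work in a Django template, a Rails view, or a component in any front-end library.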

What is included in the "yearly updates" mentioned?

The yearly updates refer to a commitment to ongoing development and maintenance of the Mod library. Purchasers can expect regular improvements, which typically include new components, additional style variants, enhancements to existing components for accessibility or browser support, updates to the icon library, and adaptations to follow modern web design trends. This ensures that applications built with Mod remain contemporary and leverage the latest best practices in front-end development without requiring manual overhaul.

OpenMark AI FAQ

How does OpenMark AI calculate the quality score for model outputs?

OpenMark AI employs a sophisticated, automated evaluation system that scores model outputs based on their adherence to your task's instructions and desired outcome. While the exact methodology is proprietary, it typically involves a combination of metrics that may include semantic similarity, keyword presence, factual accuracy checks (where applicable), and structured format compliance. This provides a quantitative measure of how "correct" or suitable each model's response is for your specific benchmark.

Do I need API keys for OpenAI, Anthropic, or other model providers?

No, you do not need to provide or configure any external API keys. OpenMark AI operates on a credit-based system. You purchase credits through the platform, and these credits are used to pay for the underlying API calls when you run benchmarks. This hosted approach simplifies access, manages rate limits, and provides a single, unified cost structure for testing across the entire model catalog.

What is the difference between a "task" and a "benchmark" in OpenMark?

A "task" is your defined objective: the instructions and any example inputs you create in plain language. A "benchmark" is the execution of that task. When you run a benchmark, you select which models to test against your task, configure the number of repeat runs for stability analysis, and launch the job. The benchmark results then show how each model performed on that specific task.
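The distinction can be pictured as two small data structures. This is only a conceptual sketch: the class and field names are hypothetical, not OpenMark AI's API, and the call count assumes one API call per model per repeat run.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    """The objective: plain-language instructions plus optional example inputs."""
    instructions: str
    example_inputs: list[str] = field(default_factory=list)


@dataclass
class Benchmark:
    """One execution of a task against a chosen set of models."""
    task: Task
    models: list[str]
    repeat_runs: int = 1

    def total_calls(self) -> int:
        # Assumption: one API call per model per repeat run.
        return len(self.models) * self.repeat_runs


task = Task("extract dates and product names from customer service emails")
bench = Benchmark(task, models=["model-a", "model-b", "model-c"], repeat_runs=5)
print(bench.total_calls())
```

The same `Task` can be reused across several `Benchmark` objects, for example to rerun it later against a different set of models.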

Can I use OpenMark to test private or fine-tuned models?

Currently, OpenMark AI focuses on providing access to its extensive catalog of publicly available, state-of-the-art models from major providers. The platform is designed for comparative benchmarking of these off-the-shelf models. Support for testing privately hosted or custom fine-tuned models is not a standard feature, as the platform's value lies in its managed, unified access to a wide array of pre-existing models for direct comparison.