Hostim.dev vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

Hostim.dev

Hostim.dev simplifies Docker app deployment, offering built-in databases and secure, GDPR-compliant hosting, with apps live in minutes.

Last updated: March 1, 2026

OpenMark AI

OpenMark AI instantly benchmarks over 100 AI models on your specific task to find the optimal balance of cost, speed, and quality.

Last updated: March 26, 2026

Visual Comparison

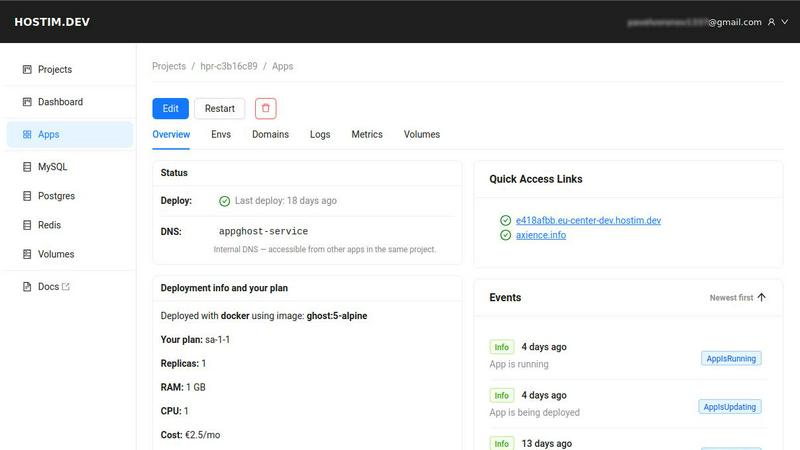

Hostim.dev

OpenMark AI

Feature Comparison

Hostim.dev

Seamless Deployment

Hostim.dev enables rapid deployment of applications from Docker images, Git repositories, or Docker Compose files. This feature allows developers to get their projects up and running in minutes, removing the need for complex configurations or extensive DevOps knowledge.

Built-in Managed Databases

Hostim.dev provides instant access to managed MySQL and PostgreSQL databases, along with Redis and persistent storage volumes. Because these services come pre-wired, developers can focus on building the application instead of administering databases, which significantly streamlines deployment.

Project Isolation and Security

Each project on Hostim.dev runs in its own isolated Kubernetes namespace, which keeps applications separated from one another and organized. This gives every project a clean environment and helps protect sensitive data from other workloads.

Transparent Pricing Model

Hostim.dev uses a clear, flat pricing model, with plans starting at €2.50 per month. Costs are tracked per project, which makes budgeting straightforward and helps avoid unexpected charges.

OpenMark AI

Plain Language Task Benchmarking

OpenMark AI removes the barrier of technical complexity by allowing users to define their test scenarios using simple, descriptive language. You don't need to write complex scripts or structured prompts; you just describe what you want the AI to do, such as "extract dates and product names from customer service emails" or "generate three taglines for a new productivity app." The platform intelligently configures the benchmark, enabling rapid, iterative testing of your actual workflow without any coding required.

Multi-Model Comparison in One Session

The platform's core strength is its ability to run your described task against a large catalog of LLMs at once. Instead of manually testing models one by one across different interfaces and dashboards, you launch a single benchmark job. OpenMark AI coordinates real API calls to all selected models and presents the results in a unified dashboard for an immediate, apples-to-apples comparison of quality scores, cost, and speed.
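To make the single-session workflow concrete, here is a minimal Python sketch of the general pattern: the same prompt goes to several models concurrently, and each result is recorded with its quality, cost, and latency in one ranked list. It is not OpenMark AI's implementation or API. The functions `fake_model_a` and `fake_model_b` are hypothetical stand-ins for real provider calls with invented outputs and costs, and `score` is a placeholder scorer.

```python
from __future__ import annotations

import time
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass
class Result:
    model: str
    output: str
    latency_s: float
    cost_usd: float
    quality: float


# Hypothetical stand-ins for real provider API calls: each returns (output, cost_usd).
def fake_model_a(prompt: str) -> tuple[str, float]:
    return "Order date: 2026-03-01, product: Widget Pro", 0.0004


def fake_model_b(prompt: str) -> tuple[str, float]:
    return "Product: Widget Pro", 0.0001


def score(output: str, expected: list[str]) -> float:
    # Placeholder scorer: fraction of expected values that appear in the output.
    return sum(item in output for item in expected) / len(expected)


def _run_one(name: str, call: Callable[[str], tuple[str, float]],
             prompt: str, expected: list[str]) -> Result:
    # Time a single model call and score its output.
    start = time.perf_counter()
    output, cost = call(prompt)
    latency = time.perf_counter() - start
    return Result(name, output, latency, cost, score(output, expected))


def run_benchmark(prompt: str,
                  models: dict[str, Callable[[str], tuple[str, float]]],
                  expected: list[str]) -> list[Result]:
    # Send the same prompt to every model concurrently, then rank the results:
    # highest quality first, cheaper model first when quality ties.
    with ThreadPoolExecutor() as pool:
        futures = [pool.submit(_run_one, name, call, prompt, expected)
                   for name, call in models.items()]
        results = [f.result() for f in futures]
    return sorted(results, key=lambda r: (-r.quality, r.cost_usd))


MODELS = {"model-a": fake_model_a, "model-b": fake_model_b}

if __name__ == "__main__":
    for r in run_benchmark("Extract dates and product names from this email.",
                           MODELS, ["2026-03-01", "Widget Pro"]):
        print(f"{r.model}: quality={r.quality:.2f} "
              f"cost=${r.cost_usd:.4f} latency={r.latency_s * 1000:.2f} ms")
```

Running the calls in a thread pool mirrors the "one job, many models" idea; with real network calls, total wall-clock time is then bounded by the slowest model rather than the sum of all of them.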

Variance and Stability Analysis

OpenMark AI provides deep insight into model reliability by running your task multiple times per model. This feature measures output consistency, showing you the variance in responses. It answers the critical question: "Will this model perform consistently when deployed at scale?" This focus on stability, beyond a single output, helps identify models that are robust and dependable versus those that are unpredictable.
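Stability can be summarized in several ways. The sketch below assumes each repeated run yields a text output and a quality score, and reports the mean score, the population standard deviation of the scores, and how often the most common output recurred. The function name `stability_report`, the choice of metrics, and the sample runs are illustrative assumptions, not OpenMark AI's documented metrics.

```python
from __future__ import annotations

import statistics
from collections import Counter


def stability_report(outputs: list[str], scores: list[float]) -> dict:
    """Summarize repeated runs of one model on one task.

    outputs: raw text from each run; scores: the quality score of each run.
    """
    if not outputs or len(outputs) != len(scores):
        raise ValueError("need one score per output and at least one run")
    top_count = Counter(outputs).most_common(1)[0][1]
    return {
        "runs": len(scores),
        "mean_quality": statistics.mean(scores),
        # Population standard deviation: 0.0 means every run scored the same.
        "quality_stdev": statistics.pstdev(scores),
        # Share of runs that produced the single most common output verbatim.
        "agreement": top_count / len(outputs),
    }


# Hypothetical runs: one model answers identically every time, the other drifts.
steady = stability_report(["Widget Pro"] * 4, [0.9, 0.9, 0.9, 0.9])
erratic = stability_report(["Widget Pro", "Widget", "N/A", "Widget Pro"],
                           [1.0, 0.6, 0.2, 1.0])
print(steady)
print(erratic)
```

Both hypothetical models can look acceptable on a single lucky run; the standard deviation and agreement values are what separate the dependable one from the unpredictable one.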

Integrated Cost-Per-Request Calculation

Every benchmark includes a precise, real-time calculation of the cost of each API call to each model. This goes beyond listed token prices, showing the actual expense of reaching a given quality level on your specific task, so you can choose a model that delivers the required performance at a sustainable operational cost.
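For most hosted LLM APIs, the cost of one request is the input token count times the input price plus the output token count times the output price. The sketch below applies that formula and then divides cost by quality score to compare value across models. The `Pricing` class, the prices, the token counts, and the quality scores are all hypothetical, and for simplicity both models are assumed to use the same token counts, although real tokenizers differ between models.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    # Hypothetical list prices in USD per one million tokens.
    input_per_million: float
    output_per_million: float


def request_cost(price: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost of one API call, billed separately for prompt and completion tokens."""
    return (input_tokens * price.input_per_million
            + output_tokens * price.output_per_million) / 1_000_000


def cost_per_quality_point(cost: float, quality: float) -> float:
    """Spend per unit of quality score (lower is better); infinite if quality is 0."""
    return math.inf if quality <= 0 else cost / quality


large = request_cost(Pricing(3.00, 15.00), input_tokens=1200, output_tokens=300)
small = request_cost(Pricing(0.50, 1.50), input_tokens=1200, output_tokens=300)
print(f"large: ${large:.5f}/request, "
      f"${cost_per_quality_point(large, 0.9):.5f} per quality point")
print(f"small: ${small:.5f}/request, "
      f"${cost_per_quality_point(small, 0.6):.5f} per quality point")
```

Under these made-up numbers, the larger model costs $0.0081 per request and the smaller one $0.00105, so the smaller model is cheaper per quality point even though it scores lower; whether that trade is acceptable depends on the minimum quality the task requires.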

Use Cases

Hostim.dev

Freelance Development

Freelancers can leverage Hostim.dev to deploy applications quickly and efficiently for their clients. With per-project billing and easy handover features, developers can demonstrate their work without the need for complex server management, ensuring a smooth transition for clients.

Agency Projects

Agencies often juggle multiple client projects, and Hostim.dev helps keep these projects isolated. With clear cost breakdowns and a dedicated environment for each client, agencies can manage resources efficiently while keeping costs under control.

Educational Purposes

Students and learners can benefit from Hostim.dev by using real infrastructure for their projects. With a free trial and student credits available, learners can deploy applications, gain hands-on experience, and create portfolios showcasing their skills without financial barriers.

Rapid Prototyping

Startups and developers can utilize Hostim.dev for rapid prototyping of their application ideas. The platform's ability to deploy quickly and manage essential services allows teams to iterate and pivot their projects efficiently, bringing ideas to market faster.

OpenMark AI

Pre-Deployment Model Selection for New Features

Development teams building a new AI-powered feature, such as a content summarizer or a customer support chatbot, can use OpenMark to empirically determine the best foundational model. By benchmarking prototypes of their exact task, they can select the optimal model based on a combination of accuracy, response time, and cost before committing to an integration, reducing risk and technical debt.
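The decision described here can be expressed as a simple rule: discard models that miss the quality bar or the latency budget, then take the cheapest of the rest. The sketch below assumes benchmark results have already been collected; the `Candidate` fields, the thresholds, and the model names and numbers are hypothetical, and weighting these criteria differently is an equally valid design.

```python
from __future__ import annotations

from typing import NamedTuple, Optional


class Candidate(NamedTuple):
    model: str
    quality: float    # benchmark quality score, 0.0 to 1.0
    latency_s: float  # typical response time in seconds
    cost_usd: float   # cost per request


def pick_model(candidates: list[Candidate], min_quality: float,
               max_latency_s: float) -> Optional[Candidate]:
    """Cheapest model that clears both the quality bar and the latency budget."""
    eligible = [c for c in candidates
                if c.quality >= min_quality and c.latency_s <= max_latency_s]
    # Ties on cost go to the higher-quality model; None if nothing qualifies.
    return min(eligible, key=lambda c: (c.cost_usd, -c.quality), default=None)


# Hypothetical benchmark results for three models.
CANDIDATES = [
    Candidate("large-model", 0.95, 2.4, 0.0081),
    Candidate("medium-model", 0.91, 0.9, 0.0020),
    Candidate("small-model", 0.78, 0.4, 0.0004),
]
print(pick_model(CANDIDATES, min_quality=0.90, max_latency_s=1.5))
```

A hard threshold keeps the rule easy to explain to stakeholders; a weighted score is an alternative when no single quality bar is obvious.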

Validating Model Performance for Critical Workflows

For companies with existing AI integrations in sensitive areas like data extraction, legal document review, or medical research assistance, OpenMark serves as a validation suite. Teams can regularly benchmark their current model against new alternatives to ensure they are still using the most effective and cost-efficient option, or to test the impact of model updates on their specific outputs.

Optimizing Agentic or Multi-Step AI Systems

When designing complex AI agents that involve routing, classification, or chaining multiple LLM calls, choosing the right model for each step is vital. Engineers can use OpenMark to benchmark subtasks—like intent classification or query reformulation—to find specialized models that improve overall system performance and reliability while controlling cascading costs.

Academic and Industrial AI Research

Researchers and analysts focused on LLM capabilities can utilize OpenMark's structured testing environment to conduct comparative studies. The platform's ability to run consistent prompts across many models and measure variance provides robust, reproducible data for analyzing model strengths, weaknesses, and evolution across different task types and difficulty levels.

Overview

About Hostim.dev

Hostim.dev is a bare-metal Platform-as-a-Service (PaaS) built for developers who want to deploy containerized applications quickly. By handling the DevOps work that usually surrounds deployment, it lets developers focus on building and innovating rather than on managing infrastructure.

Applications can be deployed from Docker images, Git repositories, or full Docker Compose files, all within minutes, which suits both experienced developers and newcomers. The platform automatically provisions MySQL, PostgreSQL, and Redis, along with persistent storage volumes, and runs each project in its own isolated Kubernetes namespace for security and organization.

Billing is transparent and hourly, so developers can predict costs, and the platform is hosted in Germany for GDPR compliance and data privacy. Whether you are a freelancer, a startup, or part of a larger agency, Hostim.dev provides an intuitive environment for shipping your ideas without managing the underlying infrastructure.

About OpenMark AI

OpenMark AI is a web-based platform that helps developers and product teams select and validate large language models (LLMs) for their specific applications. Instead of relying on theoretical benchmarks and marketing claims, it tests real-world performance at the task level: users describe their exact task in plain language, and OpenMark AI runs that prompt against a catalog of over 100 models in a single session.

The result is a side-by-side comparison built from actual API calls, measuring scored output quality, cost per request, latency, and, crucially, output stability across multiple runs. Because it reveals variance and consistency rather than a single "lucky" output, OpenMark gives teams the empirical data they need to make informed, cost-efficient decisions before shipping an AI feature.

OpenMark also removes the overhead of managing multiple API keys and configurations: its hosted, credit-based system provides immediate access to models from leading providers such as OpenAI, Anthropic, and Google. It is built for professionals who want the best balance of performance, reliability, and operational cost for their use case.

Frequently Asked Questions

Hostim.dev FAQ

What does the free tier include?

The free tier of Hostim.dev includes a 5-day trial with no signup required. This allows users to explore the platform's features, deploy applications, and test the environment without any financial commitment.

Can I deploy with just a Compose file?

Yes, you can deploy applications using just a Docker Compose file on Hostim.dev. This feature simplifies the deployment process, enabling users to go live in minutes without needing extensive DevOps expertise.

Where is my app hosted?

Your applications are hosted on bare-metal servers in Germany, in compliance with the GDPR. This puts data privacy first, making Hostim.dev a good choice for developers concerned about data protection.

Do I need to know Kubernetes?

No, you do not need to have prior knowledge of Kubernetes to use Hostim.dev. The platform abstracts the complexities of Kubernetes management, allowing developers to focus on application development without needing to understand the underlying infrastructure.

OpenMark AI FAQ

How does OpenMark AI calculate the quality score for model outputs?

OpenMark AI employs a sophisticated, automated evaluation system that scores model outputs based on their adherence to your task's instructions and desired outcome. While the exact methodology is proprietary, it typically involves a combination of metrics that may include semantic similarity, keyword presence, factual accuracy checks (where applicable), and structured format compliance. This provides a quantitative measure of how "correct" or suitable each model's response is for your specific benchmark.
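Because OpenMark AI's scoring method is proprietary, the following is only an illustration of how two of the signals named above, keyword presence and structured-format compliance, could be combined into one score. The function names, the JSON output format, the sample outputs, and the equal 50/50 weighting are assumptions made for this example.

```python
from __future__ import annotations

import json


def keyword_score(output: str, keywords: list[str]) -> float:
    """Fraction of required keywords found in the output (case-insensitive)."""
    if not keywords:
        return 1.0
    text = output.lower()
    return sum(k.lower() in text for k in keywords) / len(keywords)


def format_score(output: str, required_fields: list[str]) -> float:
    """Fraction of required fields present, if the output is a JSON object."""
    try:
        data = json.loads(output)
    except json.JSONDecodeError:
        return 0.0  # not valid JSON at all
    if not isinstance(data, dict):
        return 0.0
    if not required_fields:
        return 1.0
    return sum(f in data for f in required_fields) / len(required_fields)


def quality_score(output: str, keywords: list[str], required_fields: list[str],
                  keyword_weight: float = 0.5) -> float:
    """Weighted blend of the two signals, in the range 0.0 to 1.0."""
    return (keyword_weight * keyword_score(output, keywords)
            + (1 - keyword_weight) * format_score(output, required_fields))


KEYWORDS = ["2026-03-01", "Widget Pro"]
FIELDS = ["date", "product"]
GOOD = '{"date": "2026-03-01", "product": "Widget Pro"}'
PLAIN = "Date: 2026-03-01"
print(quality_score(GOOD, KEYWORDS, FIELDS), quality_score(PLAIN, KEYWORDS, FIELDS))
```

The plain-text answer gets partial credit for the correct date but loses the format half of the score, which is the kind of distinction a structured-extraction task needs.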

Do I need API keys for OpenAI, Anthropic, or other model providers?

No, you do not need to provide or configure any external API keys. OpenMark AI operates on a credit-based system. You purchase credits through the platform, and these credits are used to pay for the underlying API calls when you run benchmarks. This hosted approach simplifies access, manages rate limits, and provides a single, unified cost structure for testing across the entire model catalog.

What is the difference between a "task" and a "benchmark" in OpenMark?

A "Task" is your defined objective—the instructions and any example inputs you create in plain language. A "Benchmark" is the execution of that task. When you run a benchmark, you select which models to test against your task, configure the number of repeat runs for stability analysis, and launch the job. The benchmark results then show how each model performed on that specific task.
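The relationship between the two concepts can be modeled as two small data types: one reusable `Task` and any number of `Benchmark` runs that reference it. This Python sketch is hypothetical; the field names, the default of three repeat runs, and the assumption that every example input is sent to every model on every repeat are illustrative, not OpenMark AI's actual data model or billing rule.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """The objective: plain-language instructions plus optional example inputs."""
    instructions: str
    example_inputs: tuple[str, ...] = ()


@dataclass
class Benchmark:
    """One execution of a Task against a chosen set of models."""
    task: Task
    models: list[str]
    repeat_runs: int = 3  # hypothetical default; more runs give a better variance estimate

    def __post_init__(self) -> None:
        if not self.models:
            raise ValueError("select at least one model")
        if self.repeat_runs < 1:
            raise ValueError("repeat_runs must be at least 1")

    def planned_calls(self) -> int:
        """API calls this run would make, assuming each example input is sent
        to every model repeat_runs times (one call if there are no examples)."""
        inputs = max(1, len(self.task.example_inputs))
        return len(self.models) * inputs * self.repeat_runs


task = Task("Extract dates and product names from customer service emails.",
            ("First sample email", "Second sample email"))
thorough = Benchmark(task, ["model-a", "model-b", "model-c"], repeat_runs=5)
quick = Benchmark(task, ["model-a"], repeat_runs=1)
print(thorough.planned_calls(), quick.planned_calls())
```

Keeping the task separate from the benchmark means the same instructions can be rerun against a different model set or repeat count without being redefined.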

Can I use OpenMark to test private or fine-tuned models?

Currently, OpenMark AI focuses on providing access to its extensive catalog of publicly available, state-of-the-art models from major providers. The platform is designed for comparative benchmarking of these off-the-shelf models. Support for testing privately hosted or custom fine-tuned models is not a standard feature, as the platform's value lies in its managed, unified access to a wide array of pre-existing models for direct comparison.

Alternatives

Hostim.dev Alternatives

Hostim.dev is a Platform-as-a-Service (PaaS) for developers who want to deploy containerized applications without the usual DevOps overhead. It launches applications from Docker images or Git repositories quickly, which appeals to freelancers, startups, and agencies that would rather focus on application development than on infrastructure management. Users look for alternatives for various reasons, including pricing, specific feature sets, or platform requirements that better match their projects. When comparing options, consider deployment speed, ease of use, database management, security features, and compliance with data privacy regulations. Weighing these factors helps identify a solution that meets your needs while remaining efficient and reliable.

OpenMark AI Alternatives

OpenMark AI is a specialized developer tool designed for task-level benchmarking of large language models. It allows teams to run real prompts against a wide catalog of LLMs in a single session, comparing critical metrics like cost, latency, quality, and output stability to inform pre-deployment decisions. Users may explore alternatives for various reasons, such as differing budget constraints, the need for on-premise deployment, or a requirement for more granular technical controls beyond hosted benchmarking. Some may seek tools integrated directly into their CI/CD pipeline or those offering different model access or pricing structures. When evaluating other solutions, key considerations include the scope of supported models, the authenticity of performance data, the depth of analysis on cost versus quality, and the overall workflow efficiency. The ideal tool should provide transparent, actionable insights that align with your specific development stage and operational requirements.