HookMesh vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

HookMesh provides managed, reliable webhook delivery for SaaS products, plus a self-service portal where your customers manage their own endpoints.

Last updated: February 28, 2026

OpenMark AI benchmarks 100+ LLMs on your task: cost, speed, quality & stability. Browser-based; no provider API keys for hosted runs.

Visual Comparison

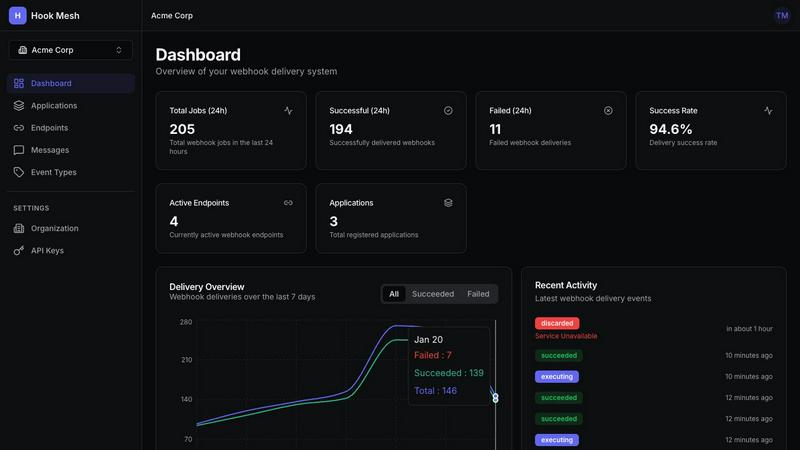

Overview

About HookMesh

HookMesh is a developer-first, managed platform for reliable webhook delivery, aimed at software-as-a-service (SaaS) products and other digital platforms. It provides hosted infrastructure that takes on the technical complexity and operational overhead of building and maintaining an in-house webhook system.

It is designed for development teams, product managers, and engineering leaders who need to send real-time event notifications to their customers but want to avoid the months of engineering effort needed to implement robust retry logic, circuit breakers, and monitoring tools.

The platform's main value is consistent delivery of critical business events such as payment confirmations, data sync triggers, and user activity alerts. HookMesh handles the full delivery lifecycle, from automatic retries with exponential backoff to a self-service portal where customers manage their own endpoints, so teams can spend their time on the core product rather than on message queue management and failure debugging.
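To make "automatic retries with exponential backoff" concrete, here is a minimal Python sketch of the general technique. It is not HookMesh's implementation: the function names, the 1-second base delay, the 60-second cap, and the retry count are all assumptions chosen for illustration, and `send` stands in for whatever performs the HTTP delivery.

```python
import time


def backoff_delays(max_retries=5, base_seconds=1.0, cap_seconds=60.0):
    """Return the wait before each retry: base, 2*base, 4*base, ... up to the cap.

    All defaults are illustrative assumptions, not HookMesh settings.
    """
    return [min(cap_seconds, base_seconds * 2 ** n) for n in range(max_retries)]


def deliver_with_retries(send, delays, sleep=time.sleep):
    """Call send() once, then once more after each delay, until it returns True.

    `send` is a hypothetical callable that returns True on successful delivery.
    """
    if send():
        return True
    for delay in delays:
        sleep(delay)  # wait before the next attempt
        if send():
            return True
    return False  # every attempt failed; the caller decides what happens next
```

Passing `sleep` as a parameter keeps the sketch easy to test without real waiting. Production systems often add random jitter to each delay so that many failing endpoints are not retried at the same moment.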

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe what you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs, so you see variance, not a single lucky output.

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

You get side-by-side results with real API calls to models, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the cheapest token price on a datasheet.
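As a rough illustration of the metrics described above, the following Python sketch summarizes repeat runs of one model into mean quality, spread across runs, and quality per dollar. It is only a sketch under stated assumptions: the tuple layout, the function name, and the quality-per-dollar formula are hypothetical and do not describe how OpenMark AI scores or reports results.

```python
from statistics import mean, pstdev


def summarize_runs(runs):
    """Summarize repeat runs of one model on one task.

    `runs` is a list of (quality_score, cost_usd, latency_seconds) tuples.
    This layout is an assumption made for the example.
    """
    scores = [r[0] for r in runs]
    costs = [r[1] for r in runs]
    latencies = [r[2] for r in runs]
    avg_quality = mean(scores)
    avg_cost = mean(costs)
    return {
        "mean_quality": avg_quality,
        # Spread of quality across repeat runs: lower means more stable output.
        "quality_stdev": pstdev(scores),
        "mean_cost": avg_cost,
        "mean_latency": mean(latencies),
        # Cost efficiency as quality relative to spend (illustrative formula).
        "quality_per_dollar": avg_quality / avg_cost if avg_cost > 0 else float("inf"),
    }
```

Comparing `quality_per_dollar` alongside `quality_stdev` shows why the cheapest token price is not the whole picture: a low-cost model with a wide spread may need more retries or review than a slightly more expensive, steadier one.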

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.